OTTAWA — As the federal government looks to criminalize the distribution of sexualized ‘deepfakes’, advocates are calling for it to go even further and provide a way to order that these images be removed.

It comes as the parliamentary justice committee is set to wrap up its study this week of Bill C-16, the Liberals’ latest piece of criminal justice legislation, which, among other measures intended to combat gender-based violence, proposes to expand the country’s laws against the non-consensual sharing of intimate images to include a “visual representation.”

This language is designed to capture sexual images created by generative artificial intelligence, whose proliferation police, victims’ groups and school boards have been warning about for years.

“They’re missing the point when it comes to victimization and removal,” Michelle Abel, a vice-president at the National Council of Women of Canada and founder of a non-profit focused on combatting the exploitation of women and children, said of the government bill.

She points to the Take It Down Act, which U.S. President Donald Trump signed into law in May 2025. A bipartisan effort later championed by First Lady Melania Trump, the law targets sexual images shared without a person’s consent, including AI-generated images known as “deepfakes,” and requires social media platforms to remove such content within 48 hours of it being reported.

“We need something more immediate like our U.S. counterpart,” Abel says.

Several provinces have amended their existing laws on the non-consensual sharing of intimate images, known as “revenge porn,” to cover images created or altered by AI. Those measures allow victims to launch civil lawsuits against people who share intimate images of them without their permission.

B.C. has also introduced a service where victims can assess their options, including how to get intimate images removed; removal can be ordered through the province’s Civil Resolution Tribunal.

Still, Suzie Dunn, an associate professor at Dalhousie University whose expertise includes deepfakes, says that process can take weeks or even months before an order is issued.

“Timing is critical in getting images removed as swiftly as possible,” she said.

Article content

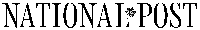

“They proliferated, they’ve been downloaded, they’ve been shared. It’s very difficult to contain an image once it’s been released.”

That is why Dunn, whose research includes technology-facilitated gender-based violence, says the federal government ought to prioritize reintroducing online harms legislation that would hold platforms accountable for dealing with such images once they are reported.

She said some websites are more responsive to removal requests than others, and operators based outside Canada are a further complicating factor.

Dunn pointed to a case in B.C. from last year in which X, after blocking an altered intimate image of a woman from being viewed within Canada, challenged the B.C. tribunal’s order to remove it.